1. You can test the scraper by using the default inputs. Assuming you want to explore the other options available, let's go through the different options step by step.

You can configure the scraper by choosing start URLs in the website/category URLs input field. The default setting is configured this way:

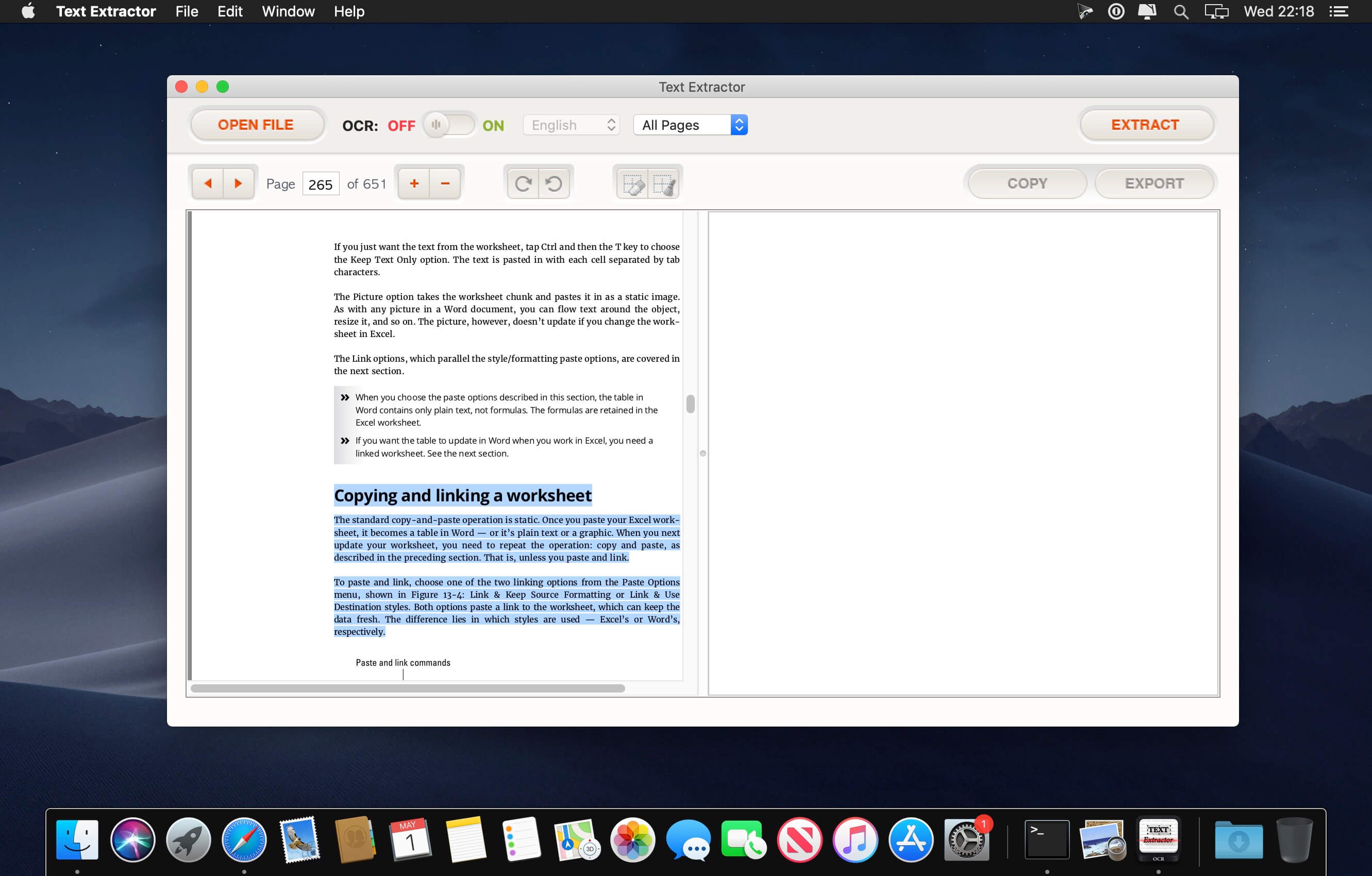

Step-by-step guide to scraping text from a website

We'll show you how to scrape text from a website with Smart Article Extractor.

How can you scrape text from a URL?

A great tool to scrape text from a URL is Smart Article Extractor. It extracts articles from any scientific, academic, or news website. The extractor crawls the whole website and automatically distinguishes articles from other web pages. You can configure it for your purposes and extract information from news websites based on the publication date, word count, pseudo URLs, and more. Download your data as JSON, HTML table, Excel, RSS feed, and more.

Text mining, also known as text data mining, is the next step after text scraping. It is similar to text analytics, as it involves deriving quality information from extant text data. You can discover hitherto unknown information by extracting such data from online resources. While text analysis grew from the field of humanities in the form of manual analysis, text mining and text analysis are now synonymous. Both are computational methods involving automated web crawling and scraping to search, retrieve, and analyze text data.

Scraping data available online for everyone to see is generally legal, since it merely automates a task that a human would have to do manually. Just ensure not to accumulate sensitive information such as personal data or copyrighted content, which are protected by various international regulations. To learn more about the legal side of web scraping, read our blog post on the subject.

One known false match in urlextract is worth noting: if an HTML snippet containing p.bold.name is on the input of urlextract.find_urls(), it will return p.bold.name as a URL. This behavior of urlextract is correct, because .name is a valid TLD, so urlextract just sees that bold.name is a valid domain name and p is a valid sub-domain.

urlextract is licensed under The MIT License.
If you want to have an up-to-date list of TLDs, you can use update():

    from urlextract import URLExtract

    extractor = URLExtract()
    extractor.update()

Or use the update_when_older() method:

    from urlextract import URLExtract

    extractor = URLExtract()
    extractor.update_when_older(7)  # updates when the list is older than 7 days

Known issues

Since a TLD can be not only an abbreviation but also a meaningful word, we might see "false matches" when we are searching for URLs in some HTML pages. A false match can occur, for example, in CSS or JS when you are referring to an HTML item: for instance, an element with the text "Jan" that a stylesheet targets with the selector p.bold.name.
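One possible way to reduce the false matches described under known issues is to drop `<style>` and `<script>` content before the text reaches the extractor. The following is a minimal sketch using only Python's standard library. The `VisibleTextParser` class, the `visible_text` helper, and the sample HTML are illustrative assumptions and are not part of urlextract.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text that sits outside <style> and <script> elements."""

    SKIPPED_TAGS = {"style", "script"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # greater than 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text found outside style/script blocks.
        if self._skip_depth == 0:
            self.chunks.append(data)


def visible_text(html):
    """Hypothetical helper: return the page text without CSS or JS content."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(chunk.strip() for chunk in parser.chunks if chunk.strip())


# Assumed sample resembling the situation described above.
sample_html = (
    '<p class="bold name">Jan</p>'
    "<style>p.bold.name { font-weight: bold; }</style>"
    "<p>Visit example.com for details.</p>"
)
cleaned = visible_text(sample_html)
print(cleaned)
```

The cleaned string could then be handed to `extractor.find_urls()` in place of the raw HTML. This only removes selectors and scripts that live inside `<style>` and `<script>` elements, so inline attributes or text that merely looks like a domain can still produce matches.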

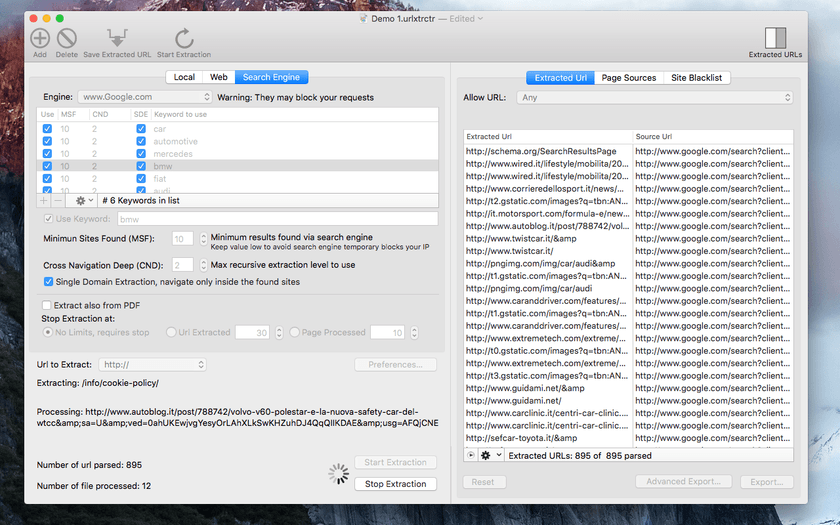

URLExtract is a Python class for collecting (extracting) URLs from given text.

It tries to find any occurrence of a TLD in the given text. When it finds one, it starts from that position to expand boundaries to both sides, searching for a "stop character" (usually whitespace, comma, single or double quote).

A DNS check option is available to also reject invalid domain names.

NOTE: The list of TLDs is downloaded to keep you up to date with new TLDs.

The package is available on PyPI, so you can install it via pip. Online documentation is also published.

Requirements

platformdirs for determining the user's cache directory
dnspython to cache DNS results

    pip install idna

Or you can install the requirements with requirements.txt:

    pip install -r requirements.txt

To run the tests, use tox.

You can look at the command line program at the end of urlextract.py. But everything you need to know is this:

    from urlextract import URLExtract

    extractor = URLExtract()
    urls = extractor.find_urls("Text with URLs. Let's have URL as an example.")
    print(urls)  # prints the list of URLs found

Or you can get a generator over the URLs in the text:

    from urlextract import URLExtract

    extractor = URLExtract()
    example_text = "Text with URLs. Let's have URL as an example."

    for url in extractor.gen_urls(example_text):
        print(url)  # prints each URL found

Or, if you just want to check whether there is at least one URL, you can do:

    from urlextract import URLExtract

    extractor = URLExtract()
    example_text = "Text with URLs. Let's have URL as an example."

    if extractor.has_urls(example_text):
        print("Given text contains some URL")
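To make the TLD-based search described above more concrete, here is a toy sketch of the idea: find a dot followed by a known TLD, then expand to both sides until a stop character is reached. It is not urlextract's actual implementation. The function name `find_url_candidates`, the tiny `KNOWN_TLDS` set, and the `STOP_CHARS` set are all assumptions made for illustration.

```python
# Tiny TLD sample for illustration only; an assumption, not the real TLD list.
KNOWN_TLDS = {"com", "org", "net", "name"}
# Characters treated as boundaries when expanding a match (also an assumption).
STOP_CHARS = set(" \t\r\n,'\"<>")


def find_url_candidates(text):
    """Return URL-like substrings found by locating a TLD and expanding around it."""
    found = []
    pos = 0
    while True:
        dot = text.find(".", pos)
        if dot == -1:
            break
        # Read the run of letters/digits that follows the dot.
        end = dot + 1
        while end < len(text) and text[end].isalnum():
            end += 1
        if text[dot + 1:end].lower() not in KNOWN_TLDS:
            pos = dot + 1
            continue
        # Expand to the left until a stop character or the start of the text.
        left = dot
        while left > 0 and text[left - 1] not in STOP_CHARS:
            left -= 1
        # Expand to the right until a stop character or the end of the text.
        right = end
        while right < len(text) and text[right] not in STOP_CHARS:
            right += 1
        candidate = text[left:right].rstrip(".")
        # Require at least one character before the dot, and skip duplicates.
        if left < dot and candidate not in found:
            found.append(candidate)
        pos = right
    return found


print(find_url_candidates("Text with URLs. Let's have URL example.com as an example."))
print(find_url_candidates("p.bold.name { font-weight: bold; }"))
```

The second call shows why a CSS selector such as p.bold.name is reported: the toy search, like the approach it imitates, only sees a valid TLD with valid-looking labels in front of it.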
